The Waifu Enthusiasts Who Quietly Push AI Forward

I spent the better part of a year pulling models off CivitAI to test for wtfood.eu. You can't browse that site for long without noticing something strange: the models that actually work best are often merges made by hobbyists with... let's say specific interests. No lab, no paper, no funding. Just a dedicated user, a 4090 GPU, and a lot of anime.

The LLM world has been buzzing lately about mergekit, and the academic paper introducing the method is getting wide attention. But nobody seems to notice the Stable Diffusion hobbyists were already doing this, at scale, for over a year.

This post is about the people academia politely ignores.

CivitAI: NSFW model dealer and creativity catalyst

CivitAI is a model-sharing platform where users distribute their fine-tuned Stable Diffusion models. What sets it apart is its permissive stance on NSFW content, and that policy has produced some unexpected technical breakthroughs.

Users started merging models: combining multiple checkpoints to get better results. The experiment worked. Today, most of the top-ranked Stable Diffusion models on the site are merges, and many of the ingredients were trained on NSFW data.
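For readers who haven't seen a merge, the simplest flavor is a weighted sum: take two checkpoints with identical architectures and blend every parameter with a ratio. Here's a minimal sketch of that idea. It assumes each checkpoint is a plain dict mapping parameter names to flat lists of floats. Real merge tools work on tensors loaded from checkpoint files and offer fancier schemes; `merge_checkpoints` and `alpha` are names I made up for illustration.

```python
from typing import Dict, List

# Assumption: a "checkpoint" here is just parameter name -> flattened weights.
Checkpoint = Dict[str, List[float]]


def merge_checkpoints(a: Checkpoint, b: Checkpoint, alpha: float = 0.5) -> Checkpoint:
    """Weighted-sum merge: (1 - alpha) * a + alpha * b, parameter by parameter."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    if a.keys() != b.keys():
        raise ValueError("checkpoints must share the same parameter names")

    merged: Checkpoint = {}
    for name, weights_a in a.items():
        weights_b = b[name]
        if len(weights_a) != len(weights_b):
            raise ValueError(f"size mismatch for parameter {name!r}")
        # Linear interpolation between the two models' weights.
        merged[name] = [
            (1.0 - alpha) * x + alpha * y for x, y in zip(weights_a, weights_b)
        ]
    return merged


if __name__ == "__main__":
    model_a = {"layer.weight": [0.0, 2.0], "layer.bias": [1.0]}
    model_b = {"layer.weight": [4.0, 2.0], "layer.bias": [3.0]}
    # alpha=0.25 keeps 75% of model_a and takes 25% of model_b.
    print(merge_checkpoints(model_a, model_b, alpha=0.25))
```

With `alpha=0.25` the result stays close to the first model and borrows a quarter of the second, which is roughly how people dial one ingredient up or down in a merge.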

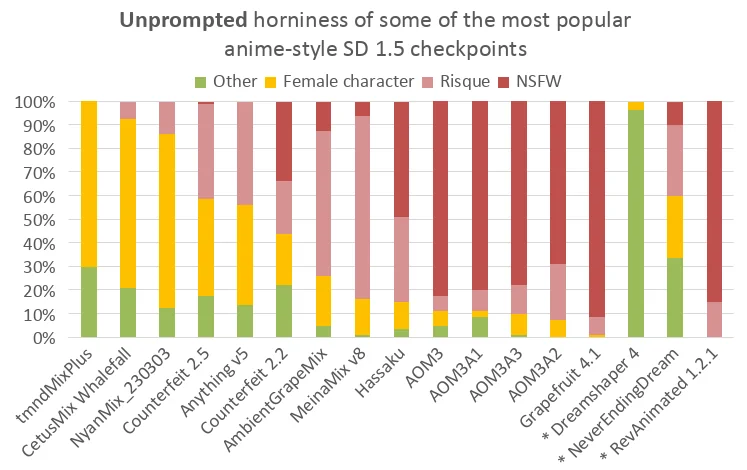

That brings an obvious problem: the most capable anime models are heavily biased toward adult content. Reddit user PRNGAppreciation ran an evaluation of the "latent horniness" of popular anime models:

I used AnythingV5 for a while and hit exactly this. I switched to Dreamshaper4 after Bernat pointed me to it for wtfood.eu, and the difference was immediate.

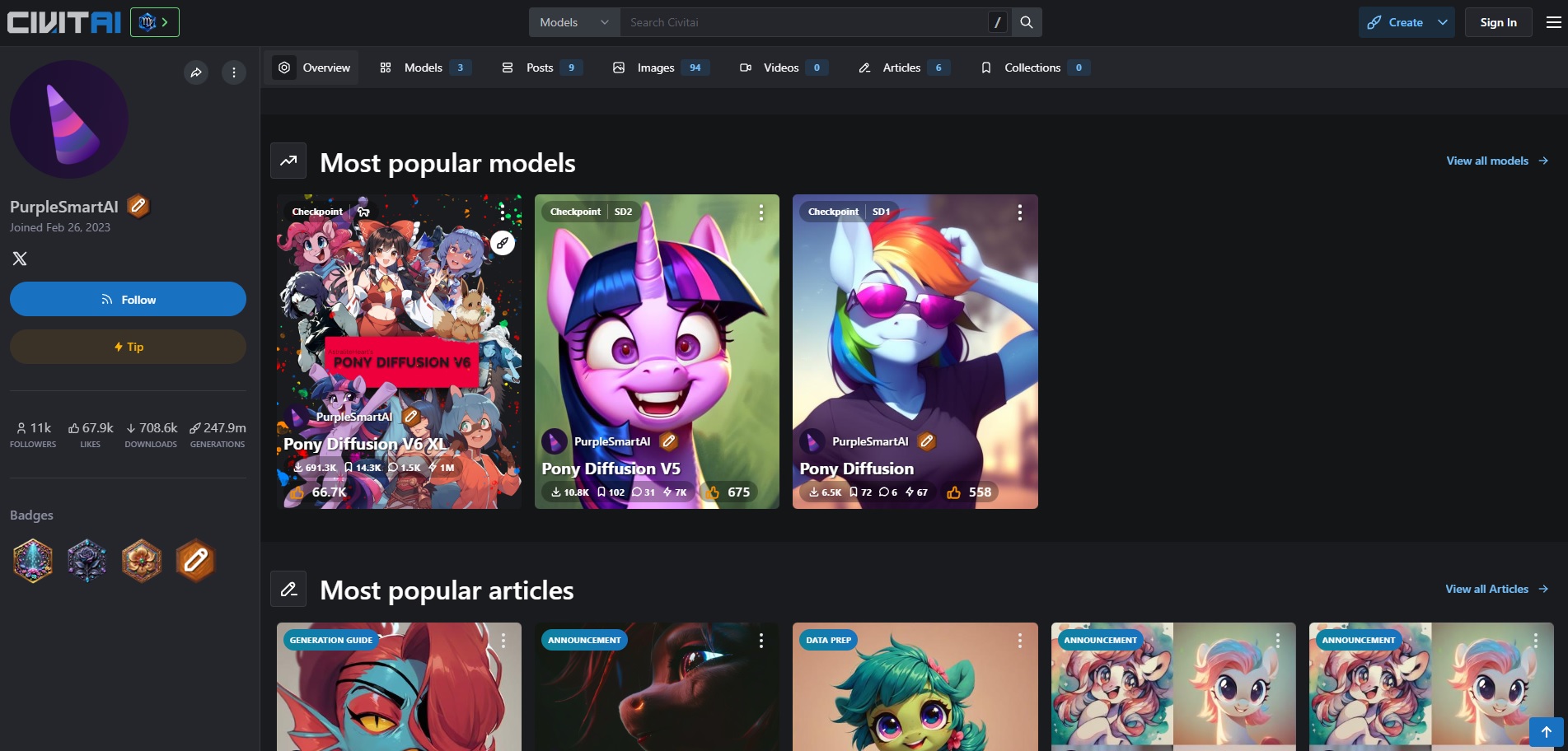

PonyDiffusion: My Little Pony and AI Innovation

One of the most successful models on CivitAI came from an unexpected source: the My Little Pony fan community. PonyDiffusion started as a fine-tune on MLP fan art and grew into one of the platform's most popular and technically sophisticated models.

What makes it stand out is the methodology. The developer spent weeks manually scoring over 30,000 images to capture human preferences, the same basic idea behind RLHF (Reinforcement Learning from Human Feedback) for language models. All of it done by one person, on MLP fan art.
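To make that concrete, here is one plausible way hand scores can feed a fine-tune: turn each rating into a quality tag and prepend it to the image's training caption, so you can later prompt for the top bucket. This is a sketch under my own assumptions, not the creator's documented pipeline. The 1-to-5 scale, the `score_N` tag format, and the function names are all hypothetical. Note that this is score conditioning, not reinforcement learning proper.

```python
from typing import List, Tuple


def score_tag(rating: int, max_rating: int = 5) -> str:
    """Map a manual rating to a caption tag (hypothetical `score_N` format)."""
    if not 1 <= rating <= max_rating:
        raise ValueError(f"rating must be between 1 and {max_rating}")
    return f"score_{rating}"


def tag_captions(samples: List[Tuple[str, int]]) -> List[str]:
    """Prepend the quality tag to each (caption, rating) pair."""
    return [f"{score_tag(rating)}, {caption}" for caption, rating in samples]


if __name__ == "__main__":
    # Hypothetical hand-scored samples: (caption, rating from 1 to 5).
    scored = [
        ("pony, standing, meadow", 5),
        ("pony, blurry sketch", 2),
    ]
    for line in tag_captions(scored):
        print(line)
```

A model trained on captions like these can learn to associate the tag with the quality level, so asking for the highest tag at generation time nudges outputs toward what the rater liked.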

The creator has been candid about what it's like to do this work outside the academy. In a now-removed blog post, they wrote:

"At the heart of the issue, they appear to dismiss Pony as merely a (perhaps low-effort) niche-focused fine-tune, and they seem uninterested in my technical efforts."

This is what I want to focus on: unconventional communities doing real technical work.

The DeepFake Origin Story

"Deepfake" is now a generic word, but the origin of the name is mostly forgotten. In 2017, a Reddit user literally named "deepfakes" started posting manipulated videos of celebrities in explicit contexts. People reimplemented the model from his comments, and that became the first accessible face-swap pipeline.

Reddit banned the subreddit. But by then academic papers were already citing those posts. The technique iterated fast and eventually turned into user-friendly tools.

Today Hollywood uses the same family of techniques to de-age actors or resurrect dead ones. The line from a banned subreddit to a studio production tool is thin, and what crossed it went on to reshape a whole industry.

"Anime Research" Pioneer Work

Super-Resolution Anime Waifus

In 2015, the best image upscaling algorithm didn't come out of Google or Microsoft. It was Waifu2x, written by a mostly unknown Japanese Kaggle user who wanted cleaner anime art.

If you didn't know, "Waifu" is internet slang for an anime character you have a crush on. I'll let you guess the motivation behind this tool, but despite its origin Waifu2x was a real step forward in super-resolution and got cited in the academic work that followed.

r/AnimeResearch... specialized applications

The r/AnimeResearch subreddit has about 4,000 members and focuses on machine learning for anime.

Their best-known tools, DeepCreamPy and HentAI, are inpainting systems built to remove censor bars from hentai. Whatever you make of the use case, the underlying technical problem, clean reconstruction of occluded regions, is a real one, and these tools predated most of the mainstream inpainting work that came later.

The same community also built "This Anime Does Not Exist", a riff on thispersondoesnotexist. They spent years pushing GANs, then switched to diffusion models the moment it became obvious diffusion was winning. Lewd fine-tunes followed within weeks.

Final thoughts

Spend enough time on CivitAI and a pattern becomes hard to miss: the people with the most domain-specific motivation are often the ones making the most domain-specific progress. Anime fans built better upscalers. An MLP fan hand-built an RLHF-style preference dataset. A single Reddit user seeded a Hollywood technique.

It's uncomfortable, because the motivations aren't the ones research institutions like to put in press releases. But if you want to know where the frontier is actually moving in open-source generative AI, you have to be willing to look at who's pushing it.

The most powerful driver of innovation isn't always noble. Sometimes it's just someone who really wants to bring their waifu to life, and is willing to score 30,000 images by hand to do it.